When I was at the INDVIL workshop about data visualisation on Lesbos a couple of weeks ago, everybody kept citing Donna Haraway. “It’s the ‘god trick’ again,” they’d say, referring to Haraway’s 1988 paper on Situated Knowledges. In it, she uses vision as a metaphor for the way science has tended to imagine knowledge about the world.

The eyes have been used to signify a perverse capacity–honed to perfection in the history of science tied to militarism, capitalism, colonialism, and male supremacy–to distance the knowing subject from everybody and everything in the interests of unfettered power. (p. 581)

Haraway connects this to what I would call machine vision (“..satellite surveillance systems, home and office video display terminals, cameras for every purpose from filming the mucous membrane lining the gut cavity of a marine worm living in the vent gases on a fault between continental plates to mapping a planetary hemisphere elsewhere in the solar system..”) and states that these technologies don’t just pretend to be all-seeing, objective and complete, they also make this seem ordinary, part of everyday life:

Vision in this technological feast becomes unregulated gluttony; all seems not just mythically about the god trick of seeing everything from nowhere, but to have put the myth into ordinary practice. (p. 581)

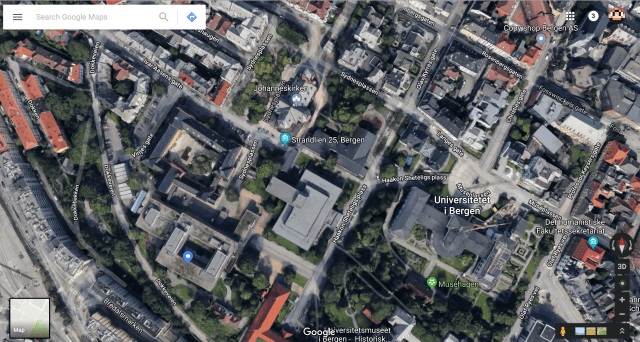

Of course, “that view of infinite vision is an illusion, a god trick” (p. 582). But it’s not an illusion that we seem to have escaped since 1988. Google’s satellite maps, for instance, have that lovely feel of “seeing everything from nowhere.” I heard Rob Tovey present a fascinating paper about this at the Post Screen Festival in Lisbon a couple of years ago (“God’s Eye View: The Satellite Photography of Google”, 2016), noting not only the “god trick,” but also the mechanics of how these images are created from multiple photographs using specific projection techniques rather than others. A map is far from objective.

Haraway’s conclusion is that the only way of achieving any kind of objectivity in science, and for her this is a feminist point, though valid for all science, is by admitting that knowledge is partial and situated. Perhaps something like a 360-degree photosphere, taken by an individual like myself using Google’s Street View app on my phone, could be classified as an example of a visual position that is partial?

If you click through that screenshot to see the way Google displays my photo, you’ll see you can drag it around to see everything I saw in every direction.

Well, almost everything I saw. If you look down, you won’t see my feet.

Google edited them out.

The knowing self is partial in all its guises, never finished, whole, simply there and original; it is always constructed and stitched together imperfectly, and therefore able to join with another, to see together without claiming to be another. Here is the promise of objectivity: a scientific knower seeks the subject position, not of identity, but of objectivity, that is, partial connection.

Those 360 images are certainly constructed and stitched together, and have a more specific standpoint or position, maybe even an implicit subject position from which you see. The glitches in the stitching together of the images remind us that they are imperfect, partial representations.

And yet the human is edited out.

Knowledge from the point of view of the unmarked is truly fantastic, distorted, and irrational. The only position from which objectivity could not possibly be practiced and honoured is the standpoint of the master, the Man, the One God, whose Eye produces, appropriates, and orders all difference. (..) Positioning is, therefore, the key practice in grounding knowledge organised around the imagery of vision. (p. 587)

I wonder whether today it is Google and technology, rather than the patriarchal male master, whose “Eye produces, appropriates, and orders all difference.”

And of course, as the scholars at our workshop about data visualisation pointed out, data visualisations are another way in which information is presented as objective, as seen from a disembodied, neutral viewpoint. The kind of viewpoint that doesn’t exist.

Above all, rational knowledge does not pretend to disengagement: to be from everywhere and so nowhere, to be free from interpretation, from being represented, to be fully self-contained or fully formalisable. (p. 590)

And of course, data visualisations tend to show the big picture. It’s nicely organised, you can see the patterns, and there are no “troubling details,” as Johanna Drucker puts it in Graphesis (2014).

That’s the god trick alright.
